As organizations move from experimenting with AI to embedding it into operations, concerns about security, privacy, and risk inevitably rise. Headlines about data leakage, hallucinations, and unintended actions reinforce a common belief: that AI introduces a fundamentally new and uncontrollable category of risk.

AI does introduce new risks, but most failures are not the result of sophisticated attacks or runaway systems. They stem from a simpler issue: governance has not kept pace with capability. Organizations deploy AI faster than they adapt their controls, then react when something breaks.

The result is often a blunt response: banning tools, restricting access, or halting initiatives entirely. Ironically, these reactions tend to reduce transparency and push AI use further underground.

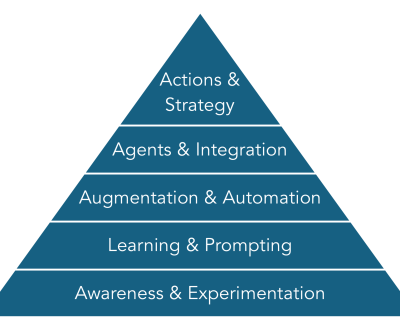

A more effective approach is to treat AI security not as a one-time decision, but as a progressive capability that evolves alongside adoption.

Early Stages: Enabling Safe Experimentation

In the earliest stages of AI use, risk is primarily about exposure, not autonomy. Employees experiment with public tools, often without clear guidance on what data is appropriate to share.

At this point, the most valuable controls come from clarity rather than technical sophistication:

- Clear acceptable-use guidance

- Explicit rules about sensitive, client, or regulated data

- Strong identity controls such as MFA

- Basic logging and audit visibility

These guardrails serve a dual purpose. They reduce obvious risk, and they signal that AI use is permitted — but expected to be thoughtful.
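As a rough illustration of how a rule about sensitive data can be turned into a lightweight check, the sketch below scans a prompt before it is pasted into a public tool. The pattern names and regular expressions are hypothetical placeholders, not a complete data-loss-prevention policy; a real organization would define its own categories.

```python
import re
from typing import List, Tuple

# Hypothetical categories; a real acceptable-use policy would define its own.
SENSITIVE_PATTERNS = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "card_like_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "client_marker": re.compile(r"\b(?:confidential|client[- ]only)\b", re.IGNORECASE),
}


def find_sensitive(text: str) -> List[str]:
    """Return the names of every category whose pattern appears in the text."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]


def check_prompt(text: str) -> Tuple[bool, List[str]]:
    """Allow the prompt only when no sensitive category matched."""
    hits = find_sensitive(text)
    return (not hits, hits)


if __name__ == "__main__":
    allowed, reasons = check_prompt("Email jane@example.com the CONFIDENTIAL forecast")
    print(allowed, reasons)
```

A check like this does not replace guidance. It simply makes the guidance visible at the moment someone is about to share data.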

Organizations that fail to establish even minimal guidance early often face a false choice later: tolerate uncontrolled use or impose restrictive bans. Neither is sustainable. At the early stages it is all too easy to paste information into ChatGPT and forget that the spreadsheet of company sales you uploaded for analysis may also be used to train the model. Experimentation usually happens on free tiers, where, depending on the provider's terms and your settings, submitted data may be retained and used for training unless you opt out.

Mid Stages: Operational Accountability

As AI moves into task-level augmentation, the nature of risk changes. The concern is no longer just what data is shared, but how AI outputs influence work.

At this stage, AI may be categorizing tickets, summarizing meetings, drafting responses, or updating systems. Errors may not be dramatic, but they can compound quietly.

Effective controls here focus on accountability:

- Clear ownership of AI-assisted outputs

- Human-in-the-loop review for customer-facing or financial actions

- Role-based access to AI capabilities

- Data classification and retention policies

Crucially, organizations must decide where AI recommendations end and human responsibility begins. Without that boundary, errors become difficult to trace and trust erodes quickly.
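The accountability controls above can be sketched as a simple release gate. This is a minimal sketch under stated assumptions: the role names, capability names, and impact labels are all hypothetical, and a real deployment would pull them from an identity system rather than a hard-coded dictionary.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical role-based access: which AI capabilities each role may use.
ROLE_CAPABILITIES = {
    "support_agent": {"summarize", "draft_reply"},
    "finance_analyst": {"summarize", "categorize_invoice"},
}

# Impact levels that require human review before release (assumed labels).
REVIEW_REQUIRED = {"customer_facing", "financial"}


@dataclass
class AiOutput:
    capability: str
    owner: str                       # named person accountable for the output
    owner_role: str
    impact: str                      # "internal", "customer_facing", or "financial"
    approved_by: Optional[str] = None


def may_use(role: str, capability: str) -> bool:
    """Role-based access check for an AI capability."""
    return capability in ROLE_CAPABILITIES.get(role, set())


def needs_review(output: AiOutput) -> bool:
    """Customer-facing and financial outputs need a human reviewer."""
    return output.impact in REVIEW_REQUIRED


def can_release(output: AiOutput) -> bool:
    """Release only if the owner may use the capability and any required review is done."""
    if not may_use(output.owner_role, output.capability):
        return False
    if needs_review(output):
        return output.approved_by is not None
    return True
```

The point of the design is that every output carries a named owner, so the boundary between AI recommendation and human responsibility is recorded rather than assumed.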

This is often where leaders realize that AI governance is not just a security function, but a management one.

Advanced Stages: Policy-Driven AI

When AI begins to orchestrate workflows or support decisions, informal controls are no longer sufficient. At this level, AI systems can initiate actions, trigger downstream effects, and operate continuously.

Here, guardrails must be embedded into the system itself:

- Policy-based approvals for AI-triggered actions

- Separation between recommendation and execution

- Monitoring for drift, anomalies, and silent failures

- Escalation paths when confidence falls below, or risk exceeds, defined thresholds

The goal is not to eliminate risk — which is impossible — but to ensure that AI behaves predictably within defined constraints.
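One way to picture embedded guardrails is a policy gate that sits between what the AI recommends and what actually runs. The sketch below is illustrative only: the `Policy` fields, the action names, and the assumption that the model reports a confidence score between 0 and 1 are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, FrozenSet, List, Tuple


class Decision(Enum):
    EXECUTE = "execute"
    NEEDS_APPROVAL = "needs_approval"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Recommendation:
    action: str
    confidence: float  # assumed model-reported score in [0, 1]


@dataclass(frozen=True)
class Policy:
    auto_allowed: FrozenSet[str]  # actions the AI may execute unattended
    min_confidence: float         # below this, a human is pulled in


def evaluate(rec: Recommendation, policy: Policy) -> Decision:
    """Decide what happens to a recommendation; this function never executes anything."""
    if rec.confidence < policy.min_confidence:
        return Decision.ESCALATE
    if rec.action in policy.auto_allowed:
        return Decision.EXECUTE
    return Decision.NEEDS_APPROVAL


def run(rec: Recommendation, policy: Policy,
        executor: Callable[[str], None],
        audit_log: List[Tuple[str, float, str]]) -> Decision:
    """Log every decision, and execute only when the policy allows it."""
    decision = evaluate(rec, policy)
    audit_log.append((rec.action, rec.confidence, decision.value))
    if decision is Decision.EXECUTE:
        executor(rec.action)
    return decision
```

Keeping `evaluate` separate from `run` mirrors the separation between recommendation and execution: the decision logic can be tested and audited without touching any downstream system.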

Organizations that succeed at this stage treat AI agents much like junior staff: empowered to act within clear authority, supervised, and auditable.

Why Progressive Security Works

A common misconception is that strong governance slows AI adoption. In practice, the opposite is often true. Organizations with clear guardrails move faster because employees know what is allowed, leaders trust the outputs, and incidents are easier to manage when they occur.

Progressive security also avoids a critical failure mode: over-engineering controls too early. Imposing advanced governance on basic experimentation often stifles learning without meaningfully reducing risk.

The key is proportionality. Controls should match the level of autonomy and impact AI has at each stage.
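Proportionality can be made concrete as a simple lookup from adoption stage to expected controls. The sketch below uses the controls listed in this article, with hypothetical identifier names, and assumes that controls are cumulative, meaning a later stage keeps everything required earlier. That cumulative rule is an assumption for illustration, not a prescription.

```python
from typing import Iterable, List

STAGE_ORDER = ["early", "mid", "advanced"]

# Controls taken from the stage lists in this article; identifiers are illustrative.
STAGE_CONTROLS = {
    "early": [
        "acceptable_use_guidance",
        "sensitive_data_rules",
        "mfa",
        "audit_logging",
    ],
    "mid": [
        "output_ownership",
        "human_in_the_loop_review",
        "role_based_access",
        "data_classification_and_retention",
    ],
    "advanced": [
        "policy_based_approvals",
        "recommendation_execution_separation",
        "drift_and_anomaly_monitoring",
        "escalation_paths",
    ],
}


def required_controls(stage: str) -> List[str]:
    """Controls for the given stage plus all earlier stages (assumed cumulative)."""
    last = STAGE_ORDER.index(stage)
    controls: List[str] = []
    for name in STAGE_ORDER[: last + 1]:
        controls.extend(STAGE_CONTROLS[name])
    return controls


def missing_controls(stage: str, in_place: Iterable[str]) -> List[str]:
    """List the controls still needed before operating at the given stage."""
    present = set(in_place)
    return [c for c in required_controls(stage) if c not in present]
```

A team experimenting with public tools would only be measured against the early list, which is the point: advanced controls are not demanded until the level of autonomy calls for them.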

From Constraint to Capability

Ultimately, AI security is not about restricting tools. It is about preserving judgment, accountability, and trust as machines take on more work.

Organizations that frame guardrails as constraints tend to oscillate between enthusiasm and retrenchment. Those that frame them as capabilities build confidence and sustain momentum.

The question leaders should be asking is not, “How do we secure AI?” but:

“What level of autonomy are we comfortable with, and what controls does that require?”

AI adoption will continue, with or without perfect policies. Asking “How do we secure AI?” still matters, but the answer depends on how much autonomy the organization is prepared to grant. The organizations that thrive will be those that recognize security not as an afterthought, but as an integral part of learning how to work with intelligent systems.

About us and this blog

Kobelt Development Inc. is an information systems support company which provides top quality and consistent client care.


- Securing AI: Why Guardrails Must Grow with Capability February 27, 2026
